The surface code: how you protect a qubit with 1,000 other qubits

Error correction is the biggest engineering challenge in quantum computing. The surface code is the leading solution. Here’s how it works, without the stabilizer math.

If you’ve read about why qubits keep breaking, you know the problem: noise destroys quantum states fast, within microseconds on some hardware. Error correction is the solution, but quantum error correction is dramatically harder than the classical version.

The surface code is the leading approach to this problem. Here’s how it works.

Why classical error correction doesn’t work

Your laptop uses error correction constantly. The basic idea is simple: make copies. Store three copies of each bit. If one flips, the other two outvote it.
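Here is a minimal sketch of the three-copy idea. The function names are made up for illustration, and real memory and storage use more compact codes than plain repetition, but the voting principle is the same.

```python
def encode(bit: int) -> list[int]:
    """Store three copies of one classical bit."""
    return [bit, bit, bit]


def decode(copies: list[int]) -> int:
    """Majority vote: whichever value at least two copies hold wins."""
    return int(sum(copies) >= 2)


stored = encode(1)
stored[0] ^= 1              # noise flips one of the three copies
recovered = decode(stored)  # the two intact copies outvote the flipped one
print(stored, "->", recovered)
```

One flipped copy is harmless. Two flipped copies would outvote the correct one, which is why this only works when errors are rare.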

Quantum computing breaks this approach in two ways:

You can’t copy a qubit. This isn’t an engineering limitation — it’s a law of physics. The “no-cloning theorem” proves you can’t make an exact copy of an unknown quantum state. So the “store three copies” strategy is fundamentally impossible.

Measuring destroys the state. In classical error correction, you can check a bit’s value anytime. With qubits, measurement collapses the state — the act of checking destroys the information you’re trying to protect.

So quantum error correction has to detect errors without looking at the data and fix them without making copies. This sounds impossible, but it’s not.

The trick: check the neighbours, not the data

Imagine you have a secret message encoded across a group of people. You can’t ask anyone what their letter is (that would reveal the message). But you can ask pairs of neighbours: “Do you two agree?” If they say yes, great. If they say no, something went wrong near them.

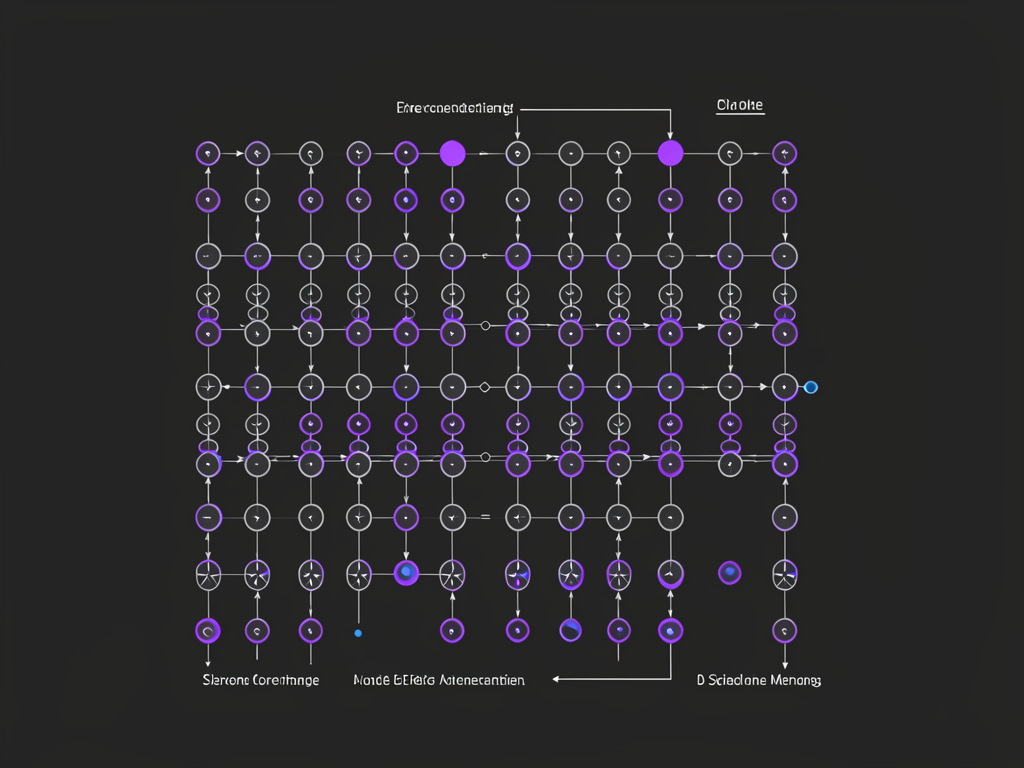

This is roughly how the surface code works. You arrange qubits in a 2D grid — like a checkerboard. Some qubits hold the actual data. Others are “check qubits” whose only job is to measure the agreement between their neighbours.

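The check qubits don’t learn what value the data qubits hold; they only learn whether neighbouring data qubits are consistent with each other. In the real code, each check compares a small group of neighbours (up to four) rather than a pair, and there are two kinds of check: one catches bit flips, the other catches phase flips. This is the key insight: you detect errors without measuring the actual information.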
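To make “agreement without the data” concrete, here is a deliberately simplified classical sketch: a row of ordinary bits that are all supposed to match, with one check per adjacent pair. It only models bit flips and is not a quantum simulation; the names `neighbour_checks` and `flip` are invented for this example.

```python
def neighbour_checks(data: list[int]) -> list[int]:
    """One answer per adjacent pair: 0 means 'we agree', 1 means 'we disagree'."""
    return [a ^ b for a, b in zip(data, data[1:])]


def flip(data: list[int], index: int) -> list[int]:
    """Return a copy of the row with one entry flipped by noise."""
    out = list(data)
    out[index] ^= 1
    return out


all_zeros = [0, 0, 0, 0, 0]
all_ones = [1, 1, 1, 1, 1]

# The same error (a flip in the middle) hits two different stored values.
checks_a = neighbour_checks(flip(all_zeros, 2))
checks_b = neighbour_checks(flip(all_ones, 2))
print(checks_a, checks_b)
```

Both rows produce the same pattern of disagreements around position 2. The checks point at where the error is, but say nothing about whether the row was storing zeros or ones.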

What happens when an error is detected

The pattern of “agree/disagree” results from all the check qubits is called the syndrome. It’s like a map of where things look suspicious.

A classical computer (running alongside the quantum computer) takes this syndrome and figures out what probably went wrong. This is called decoding, and it needs to happen fast: the checks are repeated round after round, so the decoder has to keep up with the incoming syndrome data or fall further and further behind.

The decoder doesn’t need to know the exact error. It just needs to know enough to correct it. If it guesses right (which it usually does, if errors are rare enough), the computation continues as if nothing happened.
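A toy decoder shows the “guess the most likely error” step. This sketch assumes a row of five classical bits that should all agree, with one agree/disagree check per adjacent pair, and it picks the smallest set of flips that explains the syndrome. Real surface code decoders (minimum-weight perfect matching is a common choice) solve a harder 2D version of the same problem; `decode_syndrome` and `apply_correction` are illustrative names only.

```python
def decode_syndrome(syndrome: list[int]) -> list[int]:
    """Return the lowest-weight flip pattern consistent with the syndrome."""
    # Assume the first bit is fine, then follow the disagreements along the row.
    guess = [0]
    for disagree in syndrome:
        guess.append(guess[-1] ^ disagree)
    # The complementary pattern explains the same syndrome; keep the smaller one.
    complement = [bit ^ 1 for bit in guess]
    return min(guess, complement, key=sum)


def apply_correction(data: list[int], flips: list[int]) -> list[int]:
    """Flip every position the decoder marked."""
    return [d ^ f for d, f in zip(data, flips)]


def syndrome_of(data: list[int]) -> list[int]:
    return [a ^ b for a, b in zip(data, data[1:])]


# One error on a row that was storing all ones: the decoder fixes it.
noisy = [1, 1, 0, 1, 1]
repaired = apply_correction(noisy, decode_syndrome(syndrome_of(noisy)))

# Three errors on the same row: the decoder's "most likely" guess is wrong.
too_noisy = [0, 0, 0, 1, 1]
misrepaired = apply_correction(too_noisy, decode_syndrome(syndrome_of(too_noisy)))
print(repaired, misrepaired)
```

The decoder only ever sees the syndrome, never the data. When errors are rare, the smallest explanation is usually the right one; when they are not, the “repair” quietly flips the stored value.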

The distance knob

The surface code has a parameter called distance (usually written as d). Bigger distance means:

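- More physical qubits per logical qubit (roughly d² data qubits, plus about as many check qubits)

- Exponentially better error protection, as long as the physical error rate is below the code’s threshold

- More overhead: more checks to measure and more syndrome data to decode in every round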

At distance 3 (the smallest useful size), you need 17 physical qubits to protect one logical qubit. At distance 5, 49. At distance 21 (what you’d need for serious computations), about 880.
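The counts for distance 3 and 5 match the usual formula for the “rotated” surface code layout: d² data qubits plus d² − 1 check qubits, or 2d² − 1 in total. The article doesn’t name a layout, so treat that as an assumption; other layouts use more qubits for the same distance. A quick sketch:

```python
def physical_qubits(distance: int) -> dict[str, int]:
    """Qubit counts for one logical qubit, assuming the rotated layout."""
    data = distance ** 2
    checks = distance ** 2 - 1
    return {"data": data, "check": checks, "total": data + checks}


for d in (3, 5, 21):
    print(f"distance {d}: {physical_qubits(d)}")
```

The total grows with the square of the distance, so doubling d roughly quadruples the qubit bill.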

This is why the numbers are so daunting: a useful quantum computer needs thousands of logical qubits, each requiring hundreds or thousands of physical qubits.

Why the surface code specifically?

There are many error correction codes. The surface code won the popularity contest because:

It works with nearest-neighbour connections. Each qubit only needs to talk to its immediate neighbours on the grid. This matches how most quantum chips are actually built — especially superconducting qubits, which naturally sit in 2D grids.

It has a high error threshold. The surface code can tolerate physical error rates up to about 1% per operation. Below that threshold, adding more qubits makes things better. Above it, adding more qubits makes things worse. Current hardware is near or below this threshold.

The decoder problem is well-studied. Decades of research have produced efficient decoding algorithms. This matters because decoding needs to happen in real-time during computation.
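The threshold behaviour can be illustrated with a rule of thumb often used for rough estimates: the logical error rate scales like A × (p / p_th)^((d + 1) / 2), where p is the physical error rate and p_th the threshold. The prefactor A = 0.1 below is an illustrative assumption, the 1% threshold is the figure quoted above, and the formula is only a rough guide, especially near or above threshold.

```python
def logical_error_rate(
    p: float,
    distance: int,
    p_threshold: float = 0.01,  # the ~1% threshold quoted in the text
    prefactor: float = 0.1,     # illustrative assumption, not a measured value
) -> float:
    """Heuristic estimate of the logical error rate per round."""
    return prefactor * (p / p_threshold) ** ((distance + 1) / 2)


distances = (3, 5, 7)
below = [logical_error_rate(0.001, d) for d in distances]  # 10x under threshold
above = [logical_error_rate(0.012, d) for d in distances]  # slightly over threshold

for d, lo, hi in zip(distances, below, above):
    print(f"d={d}: below threshold {lo:.1e}, above threshold {hi:.1e}")
```

Below threshold, each step up in distance multiplies the logical error rate by a number smaller than one, so it shrinks quickly. Above threshold the same multiplier is larger than one, and bigger codes perform worse.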

The catch: overhead

The surface code’s weakness is the sheer number of physical qubits required. At current error rates:

- 1 logical qubit ≈ 1,000 physical qubits (rough estimate)

- A useful quantum algorithm might need 2,000-10,000 logical qubits

- That’s 2-10 million physical qubits

Today’s biggest quantum computers have about 1,100 physical qubits. So we’re a few thousand times short (roughly 2,000× to 9,000×) of what’s needed for the most ambitious applications.
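Taking the figures above at face value, the gap is simple arithmetic. Every number in this sketch comes from the estimates in this section, and all of them are order-of-magnitude guesses rather than precise requirements.

```python
PHYSICAL_PER_LOGICAL = 1_000      # rough overhead at current error rates
LOGICAL_NEEDED = (2_000, 10_000)  # low and high estimate for a useful algorithm
LARGEST_DEVICE_TODAY = 1_100      # physical qubits on today's biggest machines

physical_needed = tuple(n * PHYSICAL_PER_LOGICAL for n in LOGICAL_NEEDED)
scale_gap = tuple(round(n / LARGEST_DEVICE_TODAY) for n in physical_needed)

print(f"physical qubits needed: {physical_needed[0]:,} to {physical_needed[1]:,}")
print(f"scale-up factor: about {scale_gap[0]:,}x to {scale_gap[1]:,}x")
```

The ratio lands in the thousands whichever end of the range you pick, so the gap is about three to four orders of magnitude.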

This is the central tension in quantum computing: the error correction works in theory, the hardware is improving, but the scale gap is enormous.

What “logical qubit” actually means

When a company announces “we demonstrated a logical qubit,” check what they mean:

- Did the logical qubit have a lower error rate than the physical qubits? This is the key test. If encoding makes things worse, the error correction isn’t actually helping.

- Was it just one cycle, or sustained operation? Running error correction for one round is easier than running it continuously.

- What distance? Distance 3 is a demonstration. Distance 7+ is where it starts being useful.

In 2024-2025, several groups (Google, Microsoft + Atom Computing, Quantinuum) demonstrated logical qubits where error correction actually helped: the encoded qubits had lower error rates than the physical ones, and in Google’s surface code experiment the error rate went down as the code distance increased. This is a genuine milestone: it means the principle works on real hardware.
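If an announcement reports error rates at several distances, the first and third questions on the checklist reduce to a couple of comparisons. The sketch below uses placeholder numbers invented for illustration, not results from any experiment, and `assess_logical_qubit` is a hypothetical helper.

```python
def assess_logical_qubit(
    physical_rate: float, logical_rates: dict[int, float]
) -> dict[str, object]:
    """Compare reported logical error rates (keyed by distance) with the physical rate."""
    distances = sorted(logical_rates)
    rates = [logical_rates[d] for d in distances]
    return {
        # Does the best encoded qubit beat the bare physical qubit?
        "beats_physical": min(rates) < physical_rate,
        # Does every increase in distance lower the error rate?
        "improves_with_distance": all(a > b for a, b in zip(rates, rates[1:])),
        # How much each step in distance suppresses errors.
        "suppression_per_step": [a / b for a, b in zip(rates, rates[1:])],
    }


# Placeholder values, made up for this example.
report = assess_logical_qubit(
    physical_rate=3e-3,
    logical_rates={3: 4e-3, 5: 2e-3, 7: 1e-3},
)
print(report)
```

In this made-up case the distance-3 code is actually worse than a bare qubit, but each step up in distance halves the error rate, and that trend is the thing to look for.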

The honest summary

- The surface code encodes one logical qubit across many physical qubits in a 2D grid

- Check qubits detect errors without measuring the actual data

- A classical decoder figures out what went wrong and how to fix it

- The overhead is roughly 1,000 physical qubits per logical qubit at current error rates

- The surface code is popular because it matches real hardware constraints

- We’ve proven it works in principle; the challenge is scaling it up by a factor of several thousand

What’s next?

Understanding error correction leads naturally to understanding how quantum circuits get compiled down to actual hardware operations — and why that compilation step matters more than most people realise.